Native ZFS for Linux on OpenStack

If you followed my post Installing OpenStack on Debian Wheezy, I’ve got a nice add-on for you: native ZFS for Linux on OpenStack. You will notice a huge disk speed advantage within your virtual machines.

For my setup I used two hard disks with the following sizes:

| Disk | Size | Path |
|---|---|---|
| WDC WD20EARX-00P | 2 TB | /dev/sda |
| WDC WD20EARX-00P | 2 TB | /dev/sdb |

Of course you could also add more disks, or SSDs for extra caching.

First of all, get your system up to date:

apt-get update && apt-get upgrade -y

Install the following utilities and dependencies:

apt-get -y install autoconf libtool git

apt-get -y install build-essential gawk alien fakeroot zlib1g-dev uuid uuid-dev libssl-dev parted linux-headers-$(uname -r)

Grab the latest SPL source, compile it, and install the resulting .deb packages:

cd /opt

git clone https://github.com/zfsonlinux/spl.git

cd spl

./autogen.sh

./configure

make deb

dpkg -i *.deb

Check that SPL loads correctly by entering:

modprobe spl

Now do the same with the ZFS source:

cd /opt

git clone https://github.com/zfsonlinux/zfs.git

cd zfs

./autogen.sh

./configure

make deb

dpkg -i *.deb

Check that ZFS is working:

modprobe zfs
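If you want to confirm that both modules really ended up loaded, a small check along these lines may help. This is only a sketch: `has_module` is a hypothetical helper name, and it assumes the usual `lsmod` layout with the module name in the first column.

```shell
# has_module: read lsmod-style output on stdin and succeed only if the
# named module appears in the first column.
has_module() {
  awk -v m="$1" '$1 == m { found = 1 } END { exit !found }'
}

# Usage on the host (not run here):
#   lsmod | has_module spl && lsmod | has_module zfs && echo "spl and zfs are loaded"
```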

Now add the init script to your system:

update-rc.d zfs defaults

And reboot your system:

reboot

After the reboot we are ready to build our storage pool:

zpool create -f -o ashift=12 storage mirror /dev/sdb /dev/sda
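A note on `ashift=12`: the value is the base-2 logarithm of the sector size the pool will assume, so 12 corresponds to 4096-byte sectors. The sketch below shows the arithmetic; `ashift_for` is a hypothetical helper, and it assumes the sector size you pass in is a power of two.

```shell
# ashift_for: print log2 of a power-of-two sector size in bytes.
ashift_for() {
  size=$1
  bits=0
  while [ "$size" -gt 1 ]; do
    size=$((size / 2))
    bits=$((bits + 1))
  done
  echo "$bits"
}

ashift_for 4096   # prints 12

# On the host you could feed it the size the kernel reports, e.g.:
#   ashift_for "$(cat /sys/block/sda/queue/physical_block_size)"
```

Some drives report 512-byte sectors even though they use 4096 bytes internally, so treat the reported value as a hint rather than proof.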

Then apply some tuning:

zfs set compression=on storage

zfs set sync=disabled storage

zfs set primarycache=all storage

zfs set atime=off storage
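To double-check that the properties took effect, you can compare the output of `zfs get` against the values set above. This is a rough sketch: `check_props` and the `expected` variable are names I made up, and the helper only reports properties that are present with a different value.

```shell
# Values as set in this post, as space-separated property=value pairs.
expected="compression=on sync=disabled primarycache=all atime=off"

# check_props: read "property<TAB>value" lines on stdin, print every
# mismatch against $expected, and fail if there was at least one.
check_props() {
  awk -F'\t' -v expected="$expected" '
    BEGIN {
      bad = 0
      n = split(expected, pairs, " ")
      for (i = 1; i <= n; i++) { split(pairs[i], kv, "="); want[kv[1]] = kv[2] }
    }
    ($1 in want) && $2 != want[$1] {
      printf "%s is %s, expected %s\n", $1, $2, want[$1]
      bad = 1
    }
    END { exit bad }
  '
}

# Usage on the host (not run here):
#   zfs get -H -o property,value compression,sync,primarycache,atime storage | check_props
```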

Important caution:

The deduplication feature requires up to 5 GB of RAM per terabyte of storage space, so if you cannot afford to dedicate that much RAM, disable dedup by entering:

zfs set dedup=off storage
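To put that rule of thumb into numbers for your own pool, here is a tiny calculation. It simply multiplies the pool size by the 5 GB per terabyte upper bound quoted above; `dedup_ram_upper_gb` is a hypothetical name, and the real requirement depends on your data.

```shell
# dedup_ram_upper_gb: upper-bound RAM estimate in GB for a pool of the
# given size in TB, using the 5 GB per TB figure from this post.
dedup_ram_upper_gb() {
  awk -v tb="$1" 'BEGIN { printf "%.1f\n", tb * 5 }'
}

dedup_ram_upper_gb 2   # a 2 TB mirror like mine: prints 10.0
```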

Reboot your System to make sure that your ZFS Pool persists.

List the pool you created earlier:

zpool list storage

NAME SIZE ALLOC FREE CAP DEDUP HEALTH ALTROOT

storage 1.81T 20.5G 1.79T 1% 1.00x ONLINE -

If the command reports a HEALTH status of ONLINE like above, the pool is ready to serve!
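If you would rather check the health from a script than by eye, the HEALTH column can be picked out of the listing. The helper below is only a sketch; `pool_health` is a hypothetical name, and it assumes the two-line header-plus-row output shown above.

```shell
# pool_health: read `zpool list <pool>` output on stdin, locate the
# HEALTH column in the header line, and print that field from the next line.
pool_health() {
  awk 'NR == 1 { for (i = 1; i <= NF; i++) if ($i == "HEALTH") col = i }
       NR == 2 && col { print $col }'
}

# Usage on the host (not run here):
#   [ "$(zpool list storage | pool_health)" = "ONLINE" ] && echo "pool is ready"
```

`zpool list -H -o health storage` should give the same answer more directly, if your version supports those options.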

To benefit from the ZFS pool we have to enable writeback caching (see also the update notes below).

Since I know of no nova.conf setting that controls the disk cache mode, we need to do this manually by editing the cache attribute in each VM’s disk driver element using

virsh edit yourID

so that it looks like:

<driver name='qemu' type='raw' cache='writeback'/>
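If you have many VMs, editing each one by hand gets tedious. The filter below is a sketch of how the same change could be scripted. `set_writeback` is a hypothetical helper, and it assumes each disk driver element sits on a single line, is self-closing, uses single-quoted attributes, and starts with `<driver name='qemu'` as in the line above. Review the result before defining it.

```shell
# set_writeback: read domain XML on stdin and force cache='writeback' on
# every one-line <driver name='qemu' .../> element. An existing cache
# attribute is dropped first so it is never duplicated.
set_writeback() {
  sed -e "/<driver name='qemu'/s/ cache='[^']*'//" \
      -e "/<driver name='qemu'/s,[[:space:]]*/>, cache='writeback'/>,"
}

# Usage on the host (not run here):
#   virsh dumpxml yourID | set_writeback > /tmp/yourID.xml
#   virsh define /tmp/yourID.xml
```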

Shut down the compute service and move your existing virtual disks to your newly created storage pool at /storage:

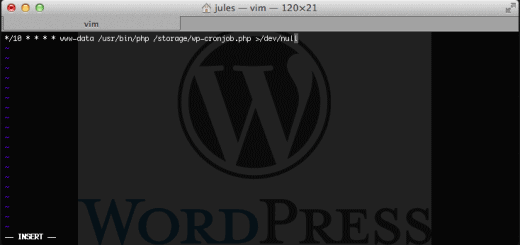

service nova-compute stop

mv /var/lib/nova/instances /storage

ln -s /storage/instances /var/lib/nova/instances
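The move and the symlink can also be wrapped in a small function that refuses to run twice. This is just a sketch with a hypothetical name, `relocate_dir`, and it does not stop nova-compute for you.

```shell
# relocate_dir: move directory $1 into existing directory $2 and leave a
# symlink at the old location. Refuses to act if $1 is already a symlink
# or if the target name already exists in $2.
relocate_dir() {
  src=$1
  dest_parent=$2
  name=$(basename "$src")
  if [ ! -d "$src" ] || [ -L "$src" ]; then
    echo "$src is not a real directory (already moved?)" >&2
    return 1
  fi
  if [ ! -d "$dest_parent" ] || [ -e "$dest_parent/$name" ]; then
    echo "$dest_parent is missing or already contains $name" >&2
    return 1
  fi
  mv "$src" "$dest_parent/" && ln -s "$dest_parent/$name" "$src"
}

# Usage on the host, after stopping nova-compute (not run here):
#   relocate_dir /var/lib/nova/instances /storage
```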

Reboot your system.

Update Note #1

To launch new OpenStack VM instances in RAW format, do the following:

Modify your /etc/nova/nova.conf and add:

use_cow_images=false

Restart the nova-compute service, then launch your new VM instance.

Reason: I realized that the default qcow2 disk image format does not benefit much from ZFS speed, and since compression is enabled on ZFS, RAW images also save disk space, much like qcow2 does.

RAW disk images are always the preferred, fastest option!

Update Note #2

I found out that there is a nova.conf flag to change the directory where instances are stored, for example:

instances_path=/storage/nova/instances

With that option you can separate the instance storage directory from the default location /var/lib/nova/instances.
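Both nova.conf flags from these update notes can be set from a script so that repeated runs do not add duplicate lines. The helper below is a sketch: `set_conf_key` is a hypothetical name, it uses GNU `sed -i`, and it assumes a flat nova.conf where appending a `key=value` line at the end puts it in the right section.

```shell
# set_conf_key: set key=value in a flat config file. An existing line
# for the key is rewritten in place, otherwise the line is appended.
# The value must not contain "|" or "&" because it is passed to sed.
set_conf_key() {
  file=$1
  key=$2
  value=$3
  if grep -q "^${key}=" "$file"; then
    sed -i "s|^${key}=.*|${key}=${value}|" "$file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}

# Usage on the host (not run here):
#   set_conf_key /etc/nova/nova.conf use_cow_images false
#   set_conf_key /etc/nova/nova.conf instances_path /storage/nova/instances
```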

Update Note #3

If ZFS eats your memory and you run out of it, here is my solution: If ZFS eats your memory

Another problem and its solution

If you find that daemons fail to start when the server boots, here is the solution: configure zfs to start before OpenStack

Amazing blog mate

Thanks 😉

Yeah, this guide was awesome.

If you want to snapshot your VM disks with ZFS snapshots, then enabling the second writeback mechanism (disabling ZFS sync is fine) is a bad idea:

1. zfs set sync=disabled storage

2. driver name='qemu' type='raw' cache='writeback'

In fact, at the KVM level you should not use cache=writeback: first, it is useless if ZFS is already caching because of the first line, and second, your disk snapshots at the ZFS level will not be consistent at all. Solution: omit cache='writeback' and the system will be as fast (or faster, because there is no double buffering), but still consistent!

Hi – never set checksum=off because this neuters ZFS’ ability to correct data corruption, which is one of the best features of ZFS.

true that.